Behrooz Parhami's Files and Documents

Page last updated on 2021 January 09

This page originally contained links to Professor Parhami's selected publication reprints and other documents, including portions of the instructor's manuals and presentation material for his textbooks. Downloadable copies of Professor Parhami's peer-reviewed publications and textbook-related material can now be obtained through the following pages:

List of Publications

Teaching and Textbooks

Complete lists of original writings (other than technical papers provided in the list of publications), published critical reviews, technical translations, and reports are available in various appendices to B. Parhami's CV. What remains here includes miscellaneous publications and other creative work, presented in the following categories:

Articles and Reports

Letters and Other Writings

(Attempts at) Humor

Poetry Page

Articles and Reports

Message on the Occasion of the 200th Issue of Computer Report (Computer Report, No. 200, 2014)

Report of Frontiers-95 Panel Session on SIMD Machines

Letters and Other Writings

Too Early for Verdict on MOOCs (Letter to Communications of the ACM, July 2013)

Proper Formatting of Tables with Numerical Data (Letter to IEEE Spectrum, Oct. 2009)

Please Communicate in Concise, Simple Language (Letter to Daily Nexus, Oct. 2007)

How to Welcome Uninvited Guests (Letter to Communications of the ACM, Sep. 2006)

An Inappropriate Forum for Public Health Theories (Letter to IEEE Computer, Dec. 2003)

The Quality of Online Registration is Less than Gold (Letter to Daily Nexus, Jan. 2003)

What is Engineering? What Does an Engineer Do? (Definitions by B. Parhami)

Does Greed Influence the Computer Industry? (Letter to IEEE Computer, Apr. 2000)

Reinventing the Mousetrap (Letter to IEEE Micro, Mar.-Apr. 2000)

Speedup versus Profitup (Letter to IEEE Concurrency, Jan.-Mar. 2000); includes a rebuttal

The Right Acronym at the Right Time (Letter to IEEE Computer, June 1995)

The Opposite of Common Sense (Letter to IEEE Computer, Jan. 1989)

Letter to the Editor: Too Early for Verdict on MOOCs

(Published in Communications of the ACM, Vol. 56, No. 7, p. 8, July 2013)

Michael A. Cusumano's Viewpoint "Are the Costs of 'Free' Too High in Online Education?" (Apr. 2013) gave an essentially positive answer despite hedging with phrases like, "Maybe, but maybe not." It was clear from the context which side he is on, saying, for example, "The industries I follow closely are still struggling to recover from the impact of free." Comparing traditional institutions of higher learning with online course offerings is somewhat unfair, as it involves contrasting institutions that have undergone decades or even centuries of refinement with a new paradigm still in its infancy. Moreover, face-to-face interaction in a classroom is wonderful under ideal conditions, but universities in much of the world simply lack ideal conditions today; for example, face-to-face interaction is unlikely when a disengaged professor teaches hundreds of students in a large lecture hall where those students have difficulty even seeing the professor or projected slides or reading the scribbles on the board and are often in uncomfortable seats or no seat at all in the case of overenrolled classes. Students sometimes forgo courses they prefer in favor of available ones and might wait weeks before receiving feedback on homework submissions or exam papers because the professor is too busy or there are too few teaching assistants. Online courses have been successful in part because they can provide immediate feedback and facilitate peer-to-peer interaction, as well as flexibility and variety. Institutions of higher learning must adapt to this wonderful new resource, and not take a defensive stance, insisting on business as usual.

Behrooz Parhami, Santa Barbara, CA

(Return to index for letters and other writings)

Letter to the Editor: Proper Formatting of Tables with Numerical Data

(Not published by IEEE Spectrum; submitted 12 Oct. 2009)

I am dismayed by the formatting of the table that appears near the middle of p. 28 of the October 2009 issue of IEEE Spectrum, as part of the article entitled "A Real Cloud Computer." In academia, we spend a great deal of energy on teaching our students to follow scientific and engineering norms in presenting data in graphic or tabular form. As a leading technical magazine, IEEE Spectrum should set examples for these best practices. Columns of numbers should always be aligned at their right end or at their decimal points, when present. So, for example, the numbers 179 and 3 in the rightmost column, with the digit 3 appearing under 1, are incorrectly formatted. Furthermore, every effort should be made to use the same measurement unit for an entire column, to facilitate comparison. So, the number 500 MHz should have been presented as 0.5 GHz underneath 2.8 GHz, with the common unit moved to the column's heading.

Behrooz Parhami, Professor of Electrical and Computer Engineering
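Editorial illustration (not part of the letter): the two rules stated in the letter above, aligning a numeric column at its right end or decimal point and using one unit per column, can be sketched in a few lines of Python. The helper names `to_ghz` and `align_decimal` are hypothetical and chosen only for this example; the sample values are the ones the letter mentions.

```python
from decimal import Decimal

# Conversion factors to the common column unit (GHz).
_TO_GHZ = {"MHz": Decimal("0.001"), "GHz": Decimal("1")}


def to_ghz(value, unit):
    """Express a frequency given as text in MHz or GHz as a plain GHz string."""
    converted = (Decimal(value) * _TO_GHZ[unit]).normalize()
    return format(converted, "f")  # fixed-point text, no exponent


def align_decimal(texts):
    """Pad numeric strings so that decimal points (or right ends) line up."""
    parts = [t.partition(".") for t in texts]
    int_width = max(len(whole) for whole, _, _ in parts)
    frac_width = max(len(frac) for _, _, frac in parts)
    lines = []
    for whole, dot, frac in parts:
        line = whole.rjust(int_width)
        if frac_width:
            line += (dot or " ") + frac.ljust(frac_width)
        lines.append(line.rstrip())
    return lines


# Integers are right-aligned, so 3 sits under the 9 of 179, not under the 1.
for line in align_decimal(["179", "3"]):
    print(line)

# Mixed units are converted first; the unit then belongs in the column heading.
print("Clock (GHz)")
for line in align_decimal([to_ghz("2.8", "GHz"), to_ghz("500", "MHz")]):
    print(line)
```

Using `Decimal` rather than floating point keeps the converted values exact, so 500 MHz prints as 0.5 and not as a rounding artifact.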

(Return to index for letters and other writings)

Letter to the Editor: Please Communicate with the University Community in Concise, Simple Language

(Not published by Daily Nexus, because the item in question was a paid ad; submitted 1 Oct. 2007)

I was dumbstruck by a full-page notice, entitled "Rolling Stock," on page 8 of the October 1, 2007, issue of the Daily Nexus. Does anyone think that busy students, staff, and faculty would be inclined to read an entire page of text, set in small font? Isn't it a principle of effective communication to highlight the main points of such a notice to ensure better understanding and to persuade readers that the rest of the content is indeed important?

I would have used the following statement, in large font, at the top of the notice: "Expect to be cited if you engage in any act that endangers the safety of pedestrians and other campus denizens; this includes riding a bike or driving a car on campus walkways. For details, read the rest of this notice."

By the way, in a document that appears to have been written by and for attorneys, the juxtaposition of item 005, which advises against biking on walkways adjacent to bike paths, and item 009, which prohibits biking on walkways altogether, is quite amusing.

Behrooz Parhami, Professor of Electrical and Computer Engineering

(Return to index for letters and other writings)

Letter to CACM "Forum": How to Welcome Uninvited Guests

Communications of the ACM, Vol. 49, No. 9, pp. 12-13, September 2006

Peter J. Denning's "The Profession of IT" column ("Infoglut," July 2006) began with a thorough assessment of the information-overload problem, but its later exploration of related solutions was seriously off the mark. Denning's advocacy of, for example, using a "smart push" approach would solve, at best, only one aspect of the problem, not even the most serious one. If you find that too many people have been coming to your house uninvited and that some of them are stealing your property, would you post a list of "desirable guests" at the door as a reasonable approach to dealing with the problem?

Behrooz Parhami, Santa Barbara, CA

Denning's response included, in part: "Although I cited [the spam, adware, and malware] problem in the column, in my enthusiasm I jumped immediately to a less-studied area that might be called 'friendly-fire infoglut.' ... I apologize to my readers for whom this transition was too abrupt."

BP's reaction to Denning's response: Abruptness of transition wasn't the issue. My objection was to looking for our keys under a lamppost, when we know we lost them somewhere else.

(Return to index for letters and other writings)

Letter to the Editor: Challenging a Theoretic Discussion

(Submitted title: An Inappropriate Forum for Public Health Theories)

IEEE Computer, Vol. 36, No. 12, p. 8, December 2003

I am writing to criticize the decision to publish "Fighting Epidemics in the Information and Knowledge Age" by Hai Zhuge and Xiaoqing Shi (The Profession, Oct. 2003, pp. 116, 114-115).

Citing their own simulation studies, the authors advance, and defend, a theory in the realm of public health: that isolation control measures had no significant effect on containing the SARS epidemic. The rest of the article reinforces their claim that exchange of information, rather than isolation, was the important factor in the eventual containment.

Discussion of this particular type of theory does not belong in a publication whose readers, and reviewers, are not experts in public health and have no way of validating the authors' claims or the correctness of their model. The authors do not cite any opposing view or theory, except for stating that their conclusions are "counterintuitive to the thinking of many people and some governments." It seems appropriate that any theory of this sort be debated and peer-reviewed by public health researchers, not by computer professionals.

A particular contradiction in the article is noteworthy. The statement that "People will adopt intelligent behavior to avoid infection ..." renders the analogy between e-viruses and biological viruses less than compelling. In fact, the best defense against e-viruses appears to be isolation control measures -- firewalls, virus filters, discarding unsolicited attachments, and so on.

Information technology affects all aspects of modern society. The Web is a useful tool for everyone. This, however, does not provide a license for indiscriminate publication of any claim that is purportedly based on computer modeling. A balanced review of how computers are used in the public health arena would, of course, be quite useful to your readers.

Behrooz Parhami, Santa Barbara, California

Letter to the Editor: The Quality of Online Registration is Less than Gold*

Daily Nexus (UCSB student newspaper), Vol. 83, No. 52, p. 6, January 9, 2003

I have been teaching at UCSB for 14 years, yet I never had any reason to look at our registration system until my older son enrolled as a freshman this past fall. Having witnessed my son use the Web-based registration system twice, I am appalled by how it violates numerous rules of good user interface design that we routinely teach in our computer science and engineering courses.

To sign up for a chemistry lab that has tens of sections, a student must enter the enrollment code for each section in turn, until she or he finds one that is open. The one that is open may conflict with a previously chosen course, leading to the need for several iterations, all of these while worrying about the connection timing out.

Imagine a person wanting to fly from Los Angeles to New York being required to look up flight codes in a book and then given a limited time to enter dozens of flight codes, one by one, until an open flight is found!

Why can't a student enter the course number and his or her preferred times, with the computerized system listing all open sections in order of proximity to the desired time? One can go even further and expect that the student be able to enter a list of courses needed, with the machine generating feasible full or partial schedules and presenting them in graphical form.

It seems that the requirement for entering enrollment codes is a leftover from the days of registration by telephone when the user interface consisted of the very limited keypad input and voice output.

Next time my students come up with awkward user interfaces in their assignments or projects, I will be less inclined to judge them harshly; after all, students do learn by example!

Behrooz Parhami

* "Gold" refers to the UCSB registration system.
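Editorial illustration (not part of the letter): the first suggestion in the letter above, listing all open sections in order of proximity to the student's desired time, can be sketched as follows. Everything here is an assumption made for the example: the `Section` record, its fields, the enrollment codes, and the simplification that each section meets on a single day. It says nothing about how the actual registration system is built.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Section:
    code: str      # enrollment code (made-up values below)
    day: str       # single meeting day, a simplification
    start: int     # start time in minutes after midnight
    end: int       # end time in minutes after midnight
    is_open: bool  # True if seats remain


def overlaps(a, b):
    """Two sections conflict if they meet on the same day at overlapping times."""
    return a.day == b.day and a.start < b.end and b.start < a.end


def open_sections_by_proximity(sections, preferred_start, chosen=()):
    """Open, conflict-free sections, nearest to the preferred start time first."""
    candidates = [
        s for s in sections
        if s.is_open and not any(overlaps(s, c) for c in chosen)
    ]
    return sorted(candidates, key=lambda s: abs(s.start - preferred_start))


lab_sections = [
    Section("10001", "Mon", 8 * 60, 10 * 60 + 50, False),  # full
    Section("10002", "Mon", 13 * 60, 15 * 60 + 50, True),
    Section("10003", "Tue", 10 * 60, 12 * 60 + 50, True),  # conflicts below
    Section("10004", "Wed", 9 * 60, 11 * 60 + 50, True),
]
already_chosen = [Section("20001", "Tue", 11 * 60, 12 * 60 + 15, True)]

# The student prefers a 9:00 start; no enrollment codes need to be typed in.
for s in open_sections_by_proximity(lab_sections, 9 * 60, already_chosen):
    print(s.code, s.day, f"{s.start // 60}:{s.start % 60:02d}")
```

The letter's further suggestion, generating feasible full or partial schedules from a list of needed courses, would build on the same conflict check by searching over one section per course.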

(Return to index for letters and other writings)

What is Engineering? What Does an Engineer Do?

Professor Parhami's attempt to define engineering and the role of an engineer.

"Engineering is the art of applying scientific knowledge in constructing artifacts or processes that serve useful functions and that also satisfy practical requirements in terms of cost, usability, and reliability. An engineer is basically a link between scientists and ordinary people. She or he adapts scientific theories for everyday use and also formulates societal needs and problems in a way that motivates scientists to study those problems and develop relevant theories for them. Increasingly, engineers (especially those with advanced degrees) also act as scientists in developing and testing new theories themselves. This type of engineer is sometimes indistinguishable from an applied scientist."

(Return to index for letters and other writings)

Letter to the Editor: Does Greed Influence the Computer Industry? [Letter, as published]

IEEE Computer, Vol. 33, No. 4, p. 4, April 2000

Does a professional engineering society exist to help practicing engineers fulfill their technical and social responsibilities? Or is the role of such a society identification of the next marketing opportunity for corporations? To me, an engineer must be concerned with developing useful, usable, reliable products. If such products make a fortune for the entity that markets them and, perhaps, for the engineer, that is perfectly fine.

However, I see that the engineering process is increasingly presented in reverse order: Identify the floating dollars first and then think of some product or service to help win those dollars. Don't worry if the product doesn't fulfill any real need; marketing will take care of that.

This seems to be the main message, for example, in Ted Lewis's February Binary Critic column ("Tracking the 'Anywhere, Anytime' Inflection Point," pp. 136, 134-135), where he notes: "Once wired, mobile consumers offer endless moneymaking possibilities. ... Before this wealth potential can be realized, however, all these vehicles must be equipped with Internet access."

As an engineering educator, I am extremely alarmed to note the trend toward lesser technical content and increased marketing hype in Computer and other "technical" publications. A socially responsible engineer should go from problem, to solution, to product or process, to market. It is perhaps appropriate for the Wall Street Journal or Forbes to go in the reverse direction, but certainly not for Computer.

Behrooz Parhami, University of California, Santa Barbara

(Return to index for letters and other writings)

Letter to the Editor: Reinventing the Mousetrap

IEEE Micro, Vol. 20, No. 2, p. 4, March-April 2000

Unlike most other engineering disciplines, in some areas of computer architecture research, we do not design a better mousetrap by building upon previous ideas; we keep reinventing the mousetrap. If the mice get fatter, we just reinvent bigger mousetraps, never even thinking about cutting off the food supply. We also do not bother to ask if a mousetrap is really the best mechanism to eradicate our problems.

In the early 1970s, my fellow graduate students and I were told that data transfers between the main memory and the processor, and between primary and secondary memories, impede performance by making the processor needlessly busy. We were naturally quite impressed with associative (intelligent?) memories and logic-per-track (active?) disks that had been proposed as potential solutions to these ills.

Similar solutions, under different names, seem to resurface every decade or so. Apparently, just like their 1, 10, and 100 MHz predecessors, today's GHz, deeply pipelined, multiple-issue processors are becoming too busy and must be relieved of some of their duties. Typically, the references in research papers advancing these solutions go back only five to six years (that's three to four Moore's law generations), so no connection is made with the previous cycle of ideas and solutions, which are some seven generations old!

The latest round in these reinventions calls for intelligence in memories, activeness in disks, and smartness in access cards. Designers of bloated operating systems and application programs, with layer upon layer of complexity and hordes of dubious "features," need not be concerned, however. There is no real danger that before long even the memory, disk, and access cards will be declared too busy to do their tasks and that some overzealous researcher will advocate the use of intelligent programmers, active designers, or smart users to ease their burdens.

If history is any indication, such requirements will stay within the hardware boundary. Soon, someone will say that there is too much duplication in logic or storage and that signal propagation delays between intelligent/active/smart whatever and the CPU are impeding performance. Then, processor, main memory, and a tiny magnetic disk will be integrated onto a single microchip. Before long, this chip will become too busy, and the cycle will restart (albeit with new buzzwords), without the call for intelligence, activeness, or smartness ever extending outside the hardware realm.

Behrooz Parhami, University of California, Santa Barbara

(Return to index for letters and other writings)

Letter to the Editor: Speedup versus Profitup

IEEE Concurrency, Vol. 8, No. 1, pp. 3-4, January-March 2000

(see also the unpublished follow-up letter below)

I read with interest Yong Yan and Xiaodong Zhang's article on profit-effective parallel computing (IEEE Concurrency, April-June 1999, pp. 65-69). The good news is that the authors successfully argue for profit-effectiveness as a natural step beyond cost-effectiveness. Some parallel solutions to a given problem that are deemed cost-ineffective may be profit-effective if the resultant nonideal speedup somehow translates to superlinear monetary gains (production value) -- hence, their pitch that you should use "profitup" rather than "speedup" to compare various alternative solutions.

Now the bad news. As scientists and engineers, how concerned should we be with corporate profits and how far should we take the notion of a single metric encompassing all that is good about a system? Should we look past profits earned by one corporation to the solution's worth to a nation, the global economy, or the well-being of the human race? In the past, many researchers have rightfully criticized attempts to provide a single number as an indicator of performance (let alone cost-effectiveness and, now, profit-effectiveness). The counterargument goes that such metrics provide only starting points or zeroth-level approximations and that they might indeed be valuable if used with caution.

I would like to argue that computer scientists and engineers must draw the line at performance, usability, and reliability. The furthest we should go is to consider cost, which is a valid engineering concern and affects usability. However, even at this level, the engineer's notion of cost is far removed from the corporate model which, for example, might include training, advertising, and potential litigation costs when a new nonstandard product or process is introduced. Profits, competitive advantage (in other words, future profits), and social benefits should not enter our formulas, as they are even more difficult to factor in than cost. Leave the modeling of financial aspects to accountants and marketing people and the broader human aspects to social scientists.

Consider profits for example. In addition to sales receipts, minus expenditures, there are other profits (and losses) to consider. Fulfilling client expectations, looking "cool" in the marketplace, and moving in the direction of long-term goals are examples of less tangible or longer-term gains. Diverting attention from other (more profitable) products, fueling unreasonable expectations, and creating maintenance and support headaches exemplify longer-term losses. There is no way all of these can be quantified -- let alone incorporated into a single formula -- so let's not even try.

I have nothing against profits and do not deny their role in motivating technical innovation. However, worrying about profits should not be part of the scientist's or engineer's routine. If we start including profits in our formulas, even assuming that logarithms and square roots can capture the complex market parameters, we will be inclined to work on ideas and gadgets based on (perceived or real) profit potentials rather than on usefulness and technical merit. To see the danger, ask any engineer if he wants his doctor to recommend treatments that maximize the insurance company's profits.

It is fine to ask an engineer to design a computer that weighs 1 kg, can survive a 1-meter fall onto a hardwood floor, is aimed at young kids ages three to seven, and would sell for $100 in 2001. It is not okay to ask her to create a product that increases the company's market share and doubles its stock prices by 2001 (even though the aforementioned computer might be just such a product). In the first case, the problem is within the engineer's domain of expertise and satisfies her professional commitment to serve the society through the development of useful products and processes. In the second case, the specs do not include anything that is of technical or social value.

Though not directly relevant to Yan and Zhang's article, I might as well take this opportunity to also fume over a related pet peeve of mine. A few days ago, I attended a well-publicized talk by the chief scientist of a leading Internet company. I expected to find out a lot of interesting technical details from this talk by a former academic, but I came back having learned only about targeted ads, the number of hits, staff size, and net worth of his company.

Where is the science? What happened to the engineering? Why do our 'technical' publications increasingly look like the Wall Street Journal and Forbes? Experiment for yourself. Take a recent issue of IEEE Spectrum or Computer. Go back 10 or 20 years and look at the corresponding issues then. See how the articles are being drained of technical content and instead filled with hype; look at the types of books reviewed; witness the news sections becoming dominated by mergers and acquisitions instead of technical innovations or breakthroughs.

Let's go back to science and engineering and leave the discussion of stocks, profits, and market shares to specialists in those areas.

Behrooz Parhami, Professor; University of California, Santa Barbara, USA

Follow-up letter

(not published due to magazine policy on avoiding continued correspondence)

To the editor:

I could not let Yong Yan and Xiaodong Zhang's reply to my letter ("Speedup versus profitup," IEEE Concurrency, January-March 2000, pp. 3-4) regarding their article on profit-effective parallel computing (April-June 1999, pp. 65-69) go unchallenged. In their reply, the authors explain their motivation and objectives:

"... computer science research should be directly motivated by its potential contributions to national and global economic growth and to our social and natural environment. ... We should encourage our PhD students to solve problems that are not only technically challenging but also potentially beneficial to society."

Completely agreeing with these statements and thinking that I might have unfairly criticized the authors' work, I went back and carefully reread their article. While the word "social" or "society" was nowhere to be found, there were 77 occurrences of "profit" and "profitup," excluding related terms such as "cost," "costup," and "dollar."

Behrooz Parhami, University of California, Santa Barbara

(Return to index for letters and other writings)

Letter to "The Open Channel": The Right Acronym at the Right Time

IEEE Computer, Vol. 28, No. 6, p. 120, June 1995

An unwritten law says that to become a recognized expert in computer architecture, first invent a four-letter acronym (more effective if the inventor dreams up groups of two or four acronyms at a time). CISC, DASD, PRAM, SIMD, VLIW, WARP -- we've got dozens of acronyms beginning practically with every letter in the alphabet. Extra points to the inventor if the acronym can be pronounced as a word, like MIPS or RISC. This inexplicable love of four-letter acronyms even extends to conferences: ICPP, IPPS, ISCA, and so on.

While reflecting recently on my 25 years of work in computer architecture, I realized that the pinnacle of my career was yet to be scaled -- I hadn't invented a four-letter acronym. Oh, I came close several times, with three- and five-letter acronyms, but they just didn't have the satisfying symmetry conveyed by the four-letter variety.

More than 20 years ago, I blew a golden opportunity when, after devising a four-way classification of associative processors based on the parallel or serial handling of words and bits within words, I failed to introduce the obvious: WPBP (word-parallel, bit-parallel), WPBS, WSBP, and WSBS. I've regretted the lapse ever since.

So, after thinking long and hard, I've finally made my contribution to the field. I've invented the communication counterpart of RISC: Scant-Interaction Network Cell (SINC*). SINC describes a network element that can perform simple routing control functions at very high speed. (How simple and how fast are matters to be decided through lengthy debates.) Its opposite, a Full-Interaction Network Cell, is a FINC.

Having established my credentials as a computer architect, I can now relax and enjoy the next 25 years!

Behrooz Parhami; University of California, Santa Barbara

* Some really great four-letter acronyms have already been taken. I started out with "Limited" instead of "Scant" but then discovered that Digital Equipment had used LINC in the early 1960s.

(Return to index for letters and other writings)

Letter to "The Open Channel": The Opposite of Common Sense

IEEE Computer, Vol. 22, No. 1, p. 98, January 1989

It has been suggested that expert systems must be equipped with common sense to be truly effective. This is not always true.

For example, an expert system for nuclear warfare will probably fail if equipped with common sense. After all, if the two sides had common sense, they wouldn't have started the arms race, let alone a nuclear war!

I don't know what the opposite of common sense is, but both "uncommon sense" and "common nonsense" seem appropriate for expert systems controlling nuclear warfare.

Behrooz Parhami; Carleton University, Ottawa, Canada

(Return to index for letters and other writings)

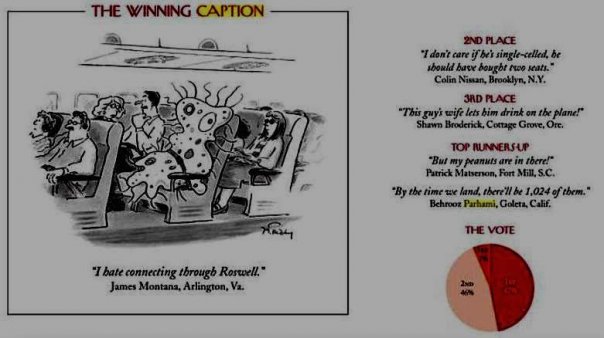

(Attempts at) Humor

Cartoon Caption Contest

Professor Parhami was delighted that a caption he submitted to The New Yorker cartoon caption contest on August 10, 2007, was judged to be among the top five. This proves once and for all that, despite his children's claims to the contrary, Professor Parhami does have a good sense of humor. The image on the right depicts page 177 of The New Yorker Cartoon Caption Contest Book: The Winners, the Losers, and Everybody in Between (2008).